As I’ve noted in a recent post, I’ve had a problem with my diskless netbooting hosts sometimes needing several boot attempts to come up again.

In this article, I will describe a short setup for virtual machines to debug such a problem. I chose a virtual machine over one of my physical hosts because it makes a lot of things easier. Chief among them is that a VM lets me watch the boot process far more easily than my physical hosts, which are all headless in my setup. It also allows for faster iteration, because most of my physical Homelab hosts are Raspberry Pis and hence a bit on the slower side.

In this post, I will also be advising against the use of VirtualBox, as it produced a lot of problems which did not appear with a QEMU VM.

Network setup

Before I could start setting up the VM, I needed to make some network changes. My desktop, where the VM was supposed to run, was in a different VLAN, and hence in a different broadcast domain, than my DNSmasq proxy DHCP server, which handles netbooting in my Homelab. As a consequence, I would not be able to use that server without some changes.

The above diagram shows an approximation of the relevant parts of my home network. There is no access from the Homelab VLAN to my workstation, and my workstation needs to go through the OPNsense router to reach the Homelab.

What I did not want to do was to add my workstation to the Homelab VLAN. That would honestly just feel wrong.

At this point, the switch port my workstation hangs off of strips the VLAN header from all packets coming from the management VLAN, and tags all incoming packets from my workstation with the management VLAN ID.

To now run a VM on my Homelab VLAN from that machine, I added the switch port to the Homelab VLAN, but did not configure stripping of the VLAN ID for outgoing packets. This meant that the main NIC device on my workstation would never see the packets coming from the Homelab VLAN, because the kernel does not forward those packets anywhere as long as no device is configured for that VLAN ID. And because the machine sends out its own packets without VLAN tagging, the switch would add a VLAN header with the management VLAN ID to them.

So the first thing to do was to create a Linux device which listens for packets with the Homelab VLAN ID. I did it like this:

ip link add link eth0 name eth0.100 type vlan id 100
ip link add name vlanbridge type bridge
ip link set dev vlanbridge up
ip link set eth0.100 master vlanbridge
ip link set dev eth0.100 up

Let me try my hand at another illustration of this setup.

This setup provides a bridge inside the network stack the VM can use like a real switch. Only packets marked with the Homelab VLAN ID will ever reach the VM, and all packets the VM sends will be sent out via the eth0.100 interface, meaning they will all get tagged with the VLAN 100 ID. If I’m not mistaken, this setup should still completely isolate my own OS while also allowing the VM to be in VLAN 100, the Homelab VLAN.
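To double-check the bridge setup above, the output of `ip -d link show` can be inspected for the VLAN ID and the bridge the device is attached to. The following is only a minimal sketch: `check_vlan_port` is a hypothetical helper name, and the strings it looks for ("vlan protocol 802.1Q id ..." and "master ...") are an assumption about how current iproute2 versions print VLAN devices.

```shell
#!/bin/bash
# Sketch: verify that a VLAN sub-interface carries the expected VLAN ID and is
# attached to the expected bridge. Reads `ip -d link show <dev>` output on stdin.
# check_vlan_port is a hypothetical helper, not part of the original setup.
check_vlan_port() {
    local vlan_id="$1" bridge="$2" details
    details="$(cat)"

    # Assumption: iproute2 prints "vlan protocol 802.1Q id <N>" for VLAN devices.
    if ! printf '%s\n' "$details" | grep -Eq "vlan protocol 802\.1Q id ${vlan_id}( |\$)"; then
        echo "VLAN ID ${vlan_id} not found"
        return 1
    fi

    # Assumption: a bridge port shows "master <bridge>" in its first line.
    if ! printf '%s\n' "$details" | grep -Eq "master ${bridge}( |\$)"; then
        echo "not attached to ${bridge}"
        return 1
    fi

    echo "ok"
}
```

With the device names used above, it would be called as `ip -d link show eth0.100 | check_vlan_port 100 vlanbridge`.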

Setting up VirtualBox

Up to this point, VirtualBox has always been my go-to VM tool for quick and dirty work on my desktop. That has changed now.

When setting up the VM, I chose the network bridge option in VirtualBox’s config. At the beginning, this seemed to be working. I had configured the VM to netboot, and after the aforementioned network re-config, the machine was able to netboot as intended.

But that was about all that worked. When logging into the VM for the first time via SSH, I already felt a pretty strong lag. Which was ridiculous, considering that it was running on a pretty potent CPU. I started an apt-get upgrade anyway. It was slow. Incredibly slow. And then it seemed to hang. SSH logins via another terminal also hung.

After a reboot and a look at the kernel logs, I saw messages like these:

rbd: rbd0: encountered watch error: -107
rbd: rbd0: failed to unwatch: -110
systemd[1]: systemd-journald.service: State 'stop-watchdog' timed out. Killing.
INFO: task kworker/u20:5:8900 blocked for more than 241 seconds.
nfs: server ceph-nfs.mei-home.net not responding, still trying
nfs: server ceph-nfs.mei-home.net OK

Here, rbd0 is my Ceph RBD network-attached root volume. I had never before seen anything like this on any of my netbooting machines. The NFS failures also indicated some sort of network problem, because none of the other 15 hosts using the same NFS mount had any similar problems.

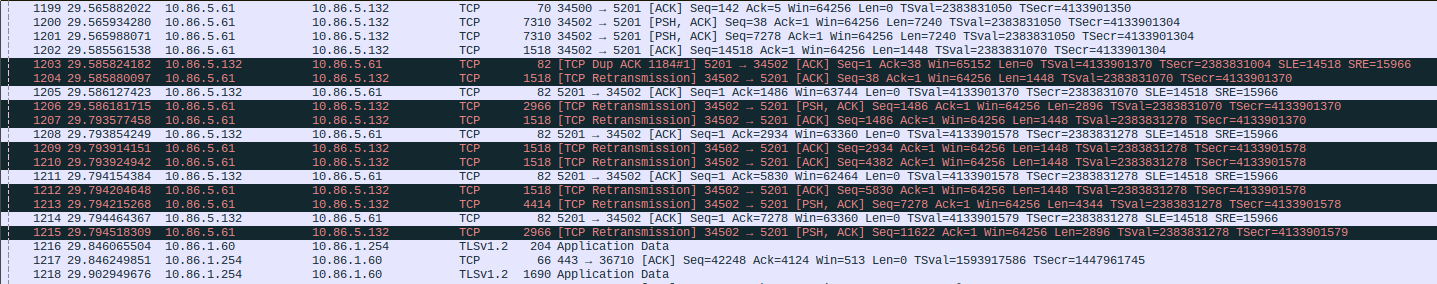

I finally gave up in resignation and conceded that it was Wireshark o’clock. And there it was.

1203 29.585824182 10.86.5.132 10.86.5.61 TCP 82 [TCP Dup ACK 1184#1] 5201 → 34502 [ACK] Seq=1 Ack=38 Win=65152 Len=0 TSval=4133901370 TSecr=2383831004 SLE=14518 SRE=15966

1204 29.585880097 10.86.5.61 10.86.5.132 TCP 1518 [TCP Retransmission] 34502 → 5201 [ACK] Seq=38 Ack=1 Win=64256 Len=1448 TSval=2383831070 TSecr=4133901370

Lots and lots of retransmissions from my VM to a number of different Ceph hosts, and the VM seeing a lot of what it considered duplicate ACKs coming from the Ceph hosts.
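To get a rough feeling for how bad such a capture is, the markers Wireshark puts into the Info column, as seen in the two packets above, can simply be counted. This is only a sketch: it assumes the packet list has been saved as plain text, one packet per line, and `summarize_tcp_issues` is a hypothetical helper name.

```shell
#!/bin/bash
# Sketch: count TCP retransmissions and duplicate ACKs in a plain-text packet
# list read from stdin (one packet per line, Info column included).
# summarize_tcp_issues is a hypothetical helper name.
summarize_tcp_issues() {
    awk '
        /\[TCP Retransmission\]/ { retrans++ }   # marker on retransmitted segments
        /\[TCP Dup ACK /         { dupack++ }    # marker on duplicate ACKs
        END {
            printf "packets=%d retransmissions=%d dup_acks=%d\n", NR, retrans, dupack
        }
    '
}
```

A call could look like `summarize_tcp_issues < packets.txt`, where packets.txt is a placeholder for the exported list. Inside Wireshark itself, the display filter `tcp.analysis.retransmission || tcp.analysis.duplicate_ack` narrows the view down to the same packets.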

Next, I tried to verify the problem with iperf. In the direction from the VM to several hosts in my Homelab, I was not able to get beyond 1.5 MBit/s. Way below my local 1 GBit/s network speed.

Next, I tried the other direction, from several Homelab hosts to the VM. And that direction worked without any issue at all.

The final straw for VirtualBox came when I ran the same iperf tests from the host OS, but via the same eth0.100 device. And I got almost the full speed in both directions.

Side note: I also got a relatively high number of retries shown by iperf here, but they did not seem to impact the performance.
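For reference, a two-direction throughput test like the one described above could be scripted roughly as follows. This is a sketch under assumptions: the capture shows port 5201, which is the iperf3 default, so I assume iperf3 here, with `iperf3 -s` already running on the target host. `throughput_test`, `run` and `DRY_RUN` are hypothetical names, and the second direction uses iperf3's reverse mode rather than starting the client on the other host.

```shell
#!/bin/bash
# Sketch: print (or run) iperf3 throughput tests in both directions.
# Assumes iperf3 is installed and "iperf3 -s" already runs on the target host.
# DRY_RUN defaults to 1, so the commands are only printed unless DRY_RUN=0.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

throughput_test() {
    target="$1"          # address of the host running the iperf3 server
    seconds="${2:-10}"   # test duration in seconds

    # This machine sends, the target receives.
    run iperf3 -c "$target" -t "$seconds"

    # Reverse mode (-R): the target sends, this machine receives.
    run iperf3 -c "$target" -t "$seconds" -R
}
```

Running `throughput_test 10.86.5.132` only prints the two commands. Setting `DRY_RUN=0` executes them.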

So with that I concluded that maybe VirtualBox was broken in some way for this particular setup.

QEMU

I decided to try it a different way: using QEMU instead of VirtualBox, just to see whether that worked better. And it did.

For launching the QEMU VM, I adapted this script I had already used when I worked on my initial netboot setup.

I extended it a little bit to also integrate the setup and teardown of the necessary networking devices, so I had everything in a neat package. It looks like this now:

#!/bin/bash
# Source: https://gist.github.com/gdamjan/3063636

MEM=4096
LAN=eth0
VLANID=100
VLAN=eth0.$VLANID
VMIF=vmif
BRIDGE=br
ARCH=x86_64 # i386

function start_vm {
    /usr/bin/qemu-system-$ARCH \
        -enable-kvm \
        -m $MEM \
        -boot n \
        -bios /usr/share/edk2-ovmf/OVMF_CODE.fd \
        -smp 4 \
        -net nic,macaddr=00:11:22:33:44:55 \
        -net tap,ifname=$VMIF,script=no,downscript=no
}

function setup_net {
    ip tuntap add dev $VMIF mode tap
    ip link add link $LAN name $VLAN type vlan id $VLANID
    ip link add name $BRIDGE type bridge
    ip link set $VLAN master $BRIDGE
    ip link set $VMIF master $BRIDGE
    ip link set $BRIDGE up
    ip link set $VLAN up
    ip link set $VMIF up
}

function reset_net {
    ip link set $VMIF down
    ip link set $VLAN down
    ip link delete $VLAN
    ip link delete $BRIDGE
    ip tuntap del dev $VMIF mode tap
}

setup_net
start_vm
reset_net

The only addition compared to the setup for VirtualBox is the tuntap interface for use by the VM, which is also added to the bridge interface.

I also added a hardcoded MAC address to the VM, so that I could use that to configure netbooting for it.

This setup worked without any issue whatsoever and has served me well in further work on my netboot setup.
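One possible hardening, which is not part of the script above, would be to tie the teardown to an EXIT trap, so that the network devices are removed even when the setup step fails partway through. The following is a sketch only: `run_with_cleanup` is a hypothetical name, and the three functions are echo-only stand-ins for the real `setup_net`, `start_vm` and `reset_net`.

```shell
#!/bin/bash
# Sketch: run setup, VM and teardown so that the teardown always happens.
# The three functions below are placeholders for the real ones from the script
# above. They only echo here, so the pattern can be tried without root.
setup_net() { echo "setup"; }
start_vm()  { echo "vm"; }
reset_net() { echo "teardown"; }

# The body is a subshell, so the trap stays local to this one run.
run_with_cleanup() (
    trap reset_net EXIT    # fires whenever the subshell exits
    setup_net || exit 1    # skip the VM if the network setup failed
    start_vm
)
```

In the real script, the last three lines (`setup_net`, `start_vm`, `reset_net`) would then be replaced by a single call to `run_with_cleanup`.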

Conclusion

I still don’t know what was wrong with VirtualBox. I seem to remember that I did not have these kinds of problems during earlier netboot testing.

Sadly, this setup did not really help me to figure out my original netboot problem, because I was not able to reproduce the reboot problem even once on the VM, similar to my reproduction failure on my test Pi.

But the setup was still worth it, as it served me well while implementing better logging for the netboot process of my hosts, which I will detail in the next post.

If any of you have any idea about what the VirtualBox problem might have been, or you have a good SOP for investigating those retries iperf was showing me between my workstation and my Homelab hosts, please don’t hesitate to contact me on Mastodon.