Wherein I talk about migrating from Cilium’s L2 announcements for LoadBalancer services to BGP.

This is an addendum to the third part of my k8s migration series.

BGP instead of L2 announcements?

In the last post, I described my setup to make LoadBalancer type services functional in my k8s Homelab with Cilium’s L2 Announcements feature. While working on the next part of my Homelab, introducing Ingress with Traefik, I ran into the issue that the source IP is not necessarily preserved during in-cluster routing.

By default, packets which arrive on a node which doesn’t have a pod of the target service are forwarded to a node which has such a pod. During that forwarding, source NAT is applied to the packet, overwriting the source IP with the IP of the node where it originally arrived. This is also described in the Kubernetes docs.

This is true for both NodePort and LoadBalancer services. I see this as a problem specifically for Ingress proxies, as it prevents things like IP allow lists and any other IP-dependent functionality in the proxy. All packets would look like they’re coming from a cluster node. With Cilium’s L2 announcements, they would all have the source IP of the node which is currently announcing the service.

This can be fixed with a config option on Kubernetes services, namely externalTrafficPolicy: Local. This has the effect that packets are not forwarded to another node if the one they arrive on doesn’t have a pod of the target service. The default mode is Cluster, where packets are forwarded to other nodes, but with the downside of sNAT.
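To make the difference between the two policies a bit more concrete, here is a toy model in Python. It is not how Cilium or kube-proxy are implemented, just the behavior described above boiled down to one function. The function name and the client IP 192.0.2.10 are made up for illustration, and the model assumes a single pod on a single node.

```python
def source_ip_seen_by_pod(client_ip, arrival_node_ip, pod_node_ip, policy):
    """Toy model: which source IP does the pod see, or None if the packet is dropped?

    Assumes exactly one pod, running on pod_node_ip.
    """
    if policy not in ("Cluster", "Local"):
        raise ValueError(f"unknown externalTrafficPolicy: {policy}")

    if arrival_node_ip == pod_node_ip:
        # The packet arrived on the node hosting the pod: no forwarding,
        # so in this model the client IP stays intact.
        return client_ip

    if policy == "Cluster":
        # Forwarded to another node, source NAT replaces the client IP
        # with the IP of the node where the packet originally arrived.
        return arrival_node_ip

    # policy == "Local": no forwarding to other nodes, the packet is dropped.
    return None


# Hypothetical client, arriving on a node without a pod of the service.
print(source_ip_seen_by_pod("192.0.2.10", "10.86.5.207", "10.86.5.206", "Cluster"))
print(source_ip_seen_by_pod("192.0.2.10", "10.86.5.207", "10.86.5.206", "Local"))
```

The second call returning None is the reason why, with Local, it matters so much which nodes actually attract the traffic for a service IP.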

Now, at some point, while reading up on L2 announcements and the externalTrafficPolicy option, I read that the Local setting doesn’t work properly with the ARP-based L2 announcements.

But now, I can’t find that anywhere anymore. 😔

This was my main trigger, but there are a couple of additional downsides of the L2 announcements feature. First, it produces a lot of load on the kube-apiserver. I went into a bit of detail in my previous post.

Then there’s the fact that with the L2 announcements feature, there’s also no real load balancing. Due to how ARP works, there can only ever be one node which announces the service IP, and so only that node will ever receive traffic for that service.

Combined with what I previously wrote, this also means that if you want to have a service with preserved source IPs and multiple pods, you’re out of luck. With externalTrafficPolicy: Local, packets will never be forwarded to another node’s pod, regardless of how many there are. The current announcer will have to carry all of the load, and any other pods on other nodes will only ever be idle.

To be entirely honest, that’s not going to be too much of a problem in my Homelab. I’m currently running exactly no jobs with more than one replica. But hey, who knows? At some point, my writing might really take off and I might need three instances serving my blog. 😉

BGP

So instead of the ARP based L2 announcements, it’s now going to be Cilium’s beta BGP control plane feature.

I really don’t know enough about the protocol, so I’m not going to annoy you with my 1 day old half-knowledge here.

Suffice it to say that with BGP, routers can exchange routes, mostly telling their peers which networks they can reach.

In the Kubernetes LoadBalancer application, Cilium will announce routes to the individual LoadBalancer service IPs through a group of cluster nodes.

A route announcement could look like this:

10.86.55.1/32 via 10.86.5.206

That would tell the peer that it can reach the service IP 10.86.55.1/32 via the Kubernetes host 10.86.5.206. Here, the 10.86.5.206 host is hanging off of a switch directly connected to my router, so the router already knows how to reach it. With the above announcement, it now also knows to forward packets targeted at 10.86.55.1 to 10.86.5.206, where Cilium will then forward them to a pod of the target service.
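What the router does with such an announcement can be sketched as a longest-prefix-match lookup. The following is only an illustration with Python’s ipaddress module, not what FRR actually does internally. The function name is made up, and the /24 for the node subnet is my assumption.

```python
import ipaddress


def next_hop(routes, destination):
    """Return the next hop of the most specific route matching destination, or None."""
    dest = ipaddress.ip_address(destination)
    best = None
    for prefix, hop in routes:
        net = ipaddress.ip_network(prefix)
        # Longest prefix wins: a /32 beats a /24 covering the same address.
        if dest in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, hop)
    return best[1] if best else None


routes = [
    # Assumption: the Kubernetes nodes live in a /24 directly attached to the router.
    ("10.86.5.0/24", "directly connected"),
    # The route learned via BGP from the announcement above.
    ("10.86.55.1/32", "10.86.5.206"),
]

print(next_hop(routes, "10.86.55.1"))   # the service IP
print(next_hop(routes, "10.86.5.206"))  # the node itself
```

A service IP without an announced route, like 10.86.55.2 in this table, finds no match at all, so in practice the router would fall back to whatever its default route says.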

One of the advantages over the Layer 2 ARP protocol used by L2 announcements is that a completely different, non-routable subnet can be used for the service IPs.

There are two parts to the setup, one is configuring the router and the other is configuring Cilium.

One thing to decide on before continuing is the Autonomous System Number.

This number is an identifier for autonomous networks. Similar to IPs, there is a range of ASNs for private usage which will never be handed out to the public Internet. It is the range 64512–65534. For more information, have a look at the ASN table on Wikipedia.

While you can use different ASNs for the router and Cilium, it is not necessary, and I will continue with the same ASN, 64555, for both.
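If you want to sanity check your own choice, a few lines of Python are enough. This is just a small sketch with made-up helper names. The range is the 16-bit private range mentioned above. The iBGP/eBGP distinction is general BGP terminology: a session between peers with the same ASN is called internal BGP, one between different ASNs external BGP.

```python
# 16-bit private ASN range, 64512 to 65534 inclusive.
PRIVATE_ASN_16BIT = range(64512, 65535)


def is_private_16bit_asn(asn):
    """True if asn falls into the 16-bit range reserved for private use."""
    return asn in PRIVATE_ASN_16BIT


def session_type(local_asn, peer_asn):
    """Same ASN on both ends means iBGP, different ASNs mean eBGP."""
    return "iBGP" if local_asn == peer_asn else "eBGP"


print(is_private_16bit_asn(64555))
print(session_type(64555, 64555))
```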

Router setup

The first step to using BGP is setting it up on the router. I’m using OPNsense here and will describe the setup. If you’re using a different router, you can adapt the instructions.

Generic instructions

To set up BGP on the router, you need a piece of software which listens on port 179 by default, receiving route announcements from peers and sending route announcements to them.

OPNsense uses a plugin which installs FRRouting, which can also be used standalone if you are, for example, running a Linux host as a router.

Once you’ve enabled BGP, you will need to add all the k8s nodes you would like to participate in BGP as peers to the router. At least in OPNsense, this means simply adding the node’s routable IP and the Cilium ASN as the node’s ASN.

One very important point that cost me quite some time: Don’t forget to make sure that the Kubernetes cluster nodes participating in BGP can actually reach port 179/TCP on your router. I spent quite a while trying to figure out why my router and Cilium wouldn’t peer. 😑
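A quick way to rule out the firewall is to try a plain TCP connection to port 179 from one of the Kubernetes nodes. Here is a minimal sketch using only Python’s standard library. The function name is made up, and a successful connect only shows that something accepts TCP connections on that port, not that the BGP session itself will come up.

```python
import socket


def can_reach_tcp(host, port=179, timeout=3.0):
    """Try to open a TCP connection to host:port, return True on success."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unreachable hosts.
        return False


# Example call, run on a Kubernetes node, with the router IP used in this post:
# print(can_reach_tcp("10.86.5.254"))
```

If this returns False on a node, check the firewall rules before digging into the BGP configuration on either side.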

OPNsense configuration

For OPNsense, the first step is to go to System -> Firmware -> Plugins and install the os-frr plugin, which is OPNsense’s way to install FRRouting.

Once that’s done, a new top level menu entry called Routing will appear.

Note: This is not the System -> Routes menu!

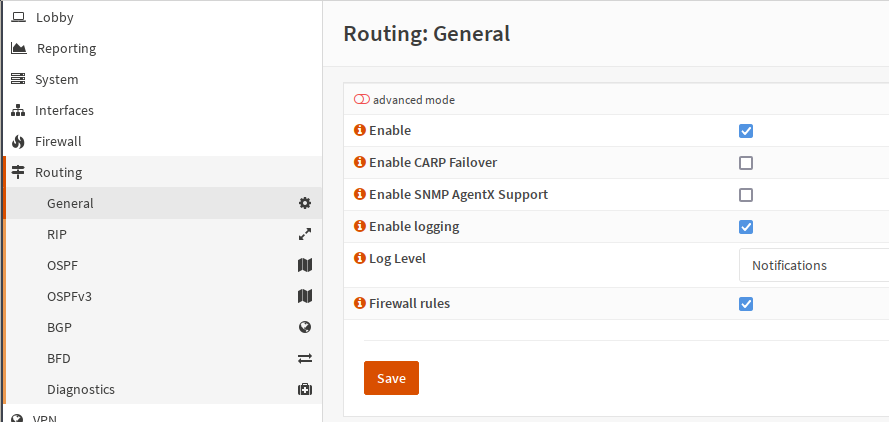

Then, enable the general routing functionality, which starts the necessary daemons:

Screenshot of the Routing -> General UI.

Hit Save after you’ve checked Enable.

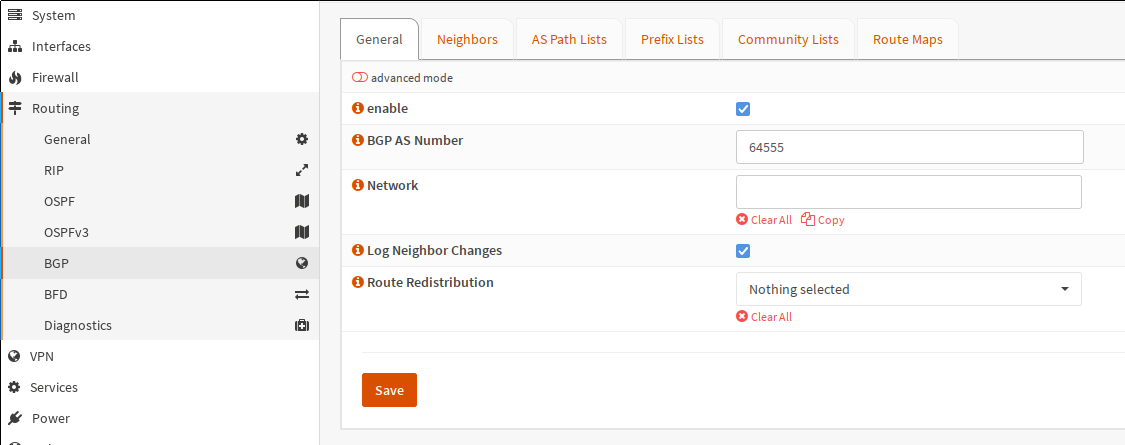

Next, go to BGP and also check Enable. Under BGP AS Number, enter the ASN you chose from the private range.

As I don’t need OPNsense redistributing any routes, I’ve left the Route Redistribution drop-down at Nothing selected. I’ve left the Network field empty for the same reason.

My config for the BGP -> General config.

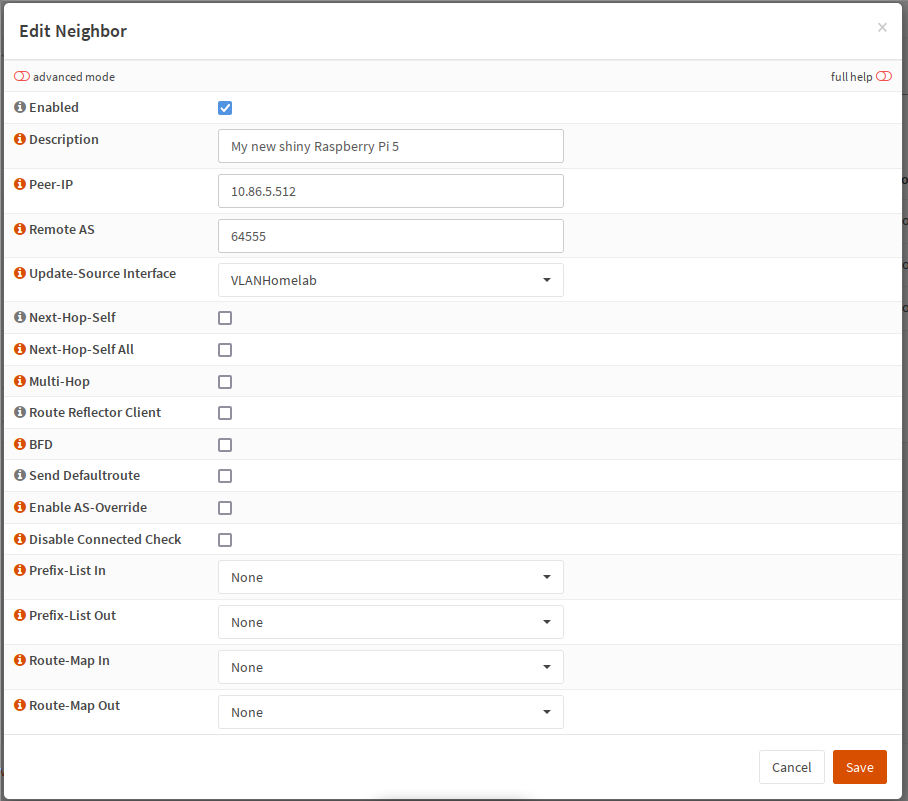

The next step is adding the neighbors. For each of the Kubernetes hosts which should announce routes, click on the + in the bottom right corner of the BGP -> Neighbors tab and enter the following information:

- A description so you know which host it is. I’m just using the hostname

- Under Peer-IP, add the IP of the Kubernetes host

- Under Remote AS, enter the ASN you chose from the private range

- Under Update-Source Interface, set the interface from which the Kubernetes host is reachable

I left all the checkboxes unchecked, and did not set anything in the Prefix-List or Route-Map fields:

Example entry for a new neighbor.

Here I’ve got a question to my readers: Isn’t there a better way than adding every single Kubernetes worker host as a peer here? It just feels like unnecessary manual work, but I didn’t find any other info on it.

With all of that done, the router config is complete.

As noted above, don’t forget to open port 179/TCP on your firewall!

Addendum 2024-02-04

I encountered an error later, when I really started using the Cilium LB. I’ve described it in this post.

In short, if you have a situation like this:

- LoadBalancer service setup as described in this post

- Host in the same subnet as your Kubernetes nodes trying to use LoadBalancer service

- LoadBalancer IPs assigned with different subnet than those hosts

You will end up with asymmetric routing. Your packets from the host accessing the service will go through OPNsense, as the packets need to be routed. But the return path of the packets will be direct, as the k8s nodes and the host using the service are in the same subnet.

You will then need to do the following:

- Switch the “State Type” for all rules allowing access from the subnet to the LoadBalancer IPs to “sloppy state”, as OPNsense will only ever see one side of a connection attempt and consequently block the connection

- Create an OUTGOING firewall rule which allows the k8s subnet to access the LoadBalancer IP as well as an INCOMING rule. I’m not sure why this works right now, but it seems to be necessary, at least in my setup.
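Whether a given client will run into this can be checked with a bit of subnet arithmetic. The sketch below uses Python’s ipaddress module. The function name, the /24 for the node subnet and the client IP 10.86.5.50 are assumptions for illustration, not values from my actual setup.

```python
import ipaddress


def has_asymmetric_path(client_ip, node_subnet, lb_ip):
    """True if requests go via the router but replies can come back directly.

    That is the case when the client shares a subnet with the k8s nodes
    while the LoadBalancer IP lies outside of that subnet.
    """
    subnet = ipaddress.ip_network(node_subnet)
    client_in_subnet = ipaddress.ip_address(client_ip) in subnet
    lb_in_subnet = ipaddress.ip_address(lb_ip) in subnet
    return client_in_subnet and not lb_in_subnet


# Hypothetical client in the same subnet as the nodes, affected:
print(has_asymmetric_path("10.86.5.50", "10.86.5.0/24", "10.86.55.5"))
# Hypothetical client in another subnet, both directions pass the router:
print(has_asymmetric_path("10.86.7.50", "10.86.5.0/24", "10.86.55.5"))
```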

Cilium Setup

The documentation for the Cilium BGP feature can be found here.

The first step of the setup is enabling the BGP functionality. As I’m using Helm to deploy Cilium, I’m adding this option to my values.yaml file:

    bgpControlPlane:
      enabled: true

Similar to the L2 announcement, the BGP functionality needs something which hands out IP addresses to the LoadBalancer services. This can be done with Cilium’s Load Balancer IPAM.

As I’ve noted above, because BGP, in contrast to L2 ARP, announces routes, it is easier to choose a CIDR which does not overlap with the subnet the Kubernetes nodes are located in. In my case, the CiliumLoadBalancerIPPool looks like this:

    apiVersion: "cilium.io/v2alpha1"
    kind: CiliumLoadBalancerIPPool
    metadata:
      name: cilium-lb-ipam
      namespace: kube-system
    spec:
      cidrs:
        - cidr: "10.86.55.0/24"

I’ve chosen only a single /24, as I don’t expect to ever reach 254 LoadBalancer services. Most of my services will run through my Traefik Ingress instead of being directly exposed.
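Both properties of the pool, no overlap with the node subnet and room for 254 addresses, are easy to verify with Python’s ipaddress module. As before, the /24 for the node subnet is an assumption on my part, only the pool CIDR comes from the manifest above.

```python
import ipaddress

pool = ipaddress.ip_network("10.86.55.0/24")
# Assumption: the Kubernetes nodes sit in this /24.
node_subnet = ipaddress.ip_network("10.86.5.0/24")

# The pool should not overlap with the subnet of the nodes.
pool_overlaps_nodes = pool.overlaps(node_subnet)

# hosts() skips the network and broadcast address of the /24.
usable_addresses = sum(1 for _ in pool.hosts())

print(pool_overlaps_nodes)
print(usable_addresses)
```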

The second part of the Cilium config is the BGP peering policy. It sets up the details of how to peer, what to announce and with whom the peering should happen.

For me, it looks like this:

    apiVersion: "cilium.io/v2alpha1"
    kind: CiliumBGPPeeringPolicy
    metadata:
      name: worker-node-bgp
      namespace: kube-system
    spec:
      nodeSelector:
        matchLabels:
          homelab/role: worker
      virtualRouters:
        - localASN: 64555
          exportPodCIDR: false
          serviceSelector:
            matchLabels:
              homelab/public-service: "true"
          neighbors:
            - peerAddress: '10.86.5.254/32'
              peerASN: 64555
              eBGPMultihopTTL: 10
              connectRetryTimeSeconds: 120
              holdTimeSeconds: 90
              keepAliveTimeSeconds: 30
              gracefulRestart:
                enabled: true
                restartTimeSeconds: 120

A couple of things to note: There can be multiple neighbors that Cilium peers with. In my case though, I’ve only got the one OPNsense router, which is reachable under 10.86.5.254 from the Kubernetes nodes. I’m using the same ASN as I used for the router’s BGP setup, 64555. I didn’t see any reason why I should have different ASNs.

The nodeSelector ensures that only my worker nodes announce routes.

Important to note is also the serviceSelector. A missing serviceSelector is notably not an error. It just means that Cilium won’t announce any routes for LoadBalancer services.

If you’d like to, you can also have Cilium announce routes to the actual pods, by setting exportPodCIDR to true.
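The effect of the serviceSelector can be modeled in a few lines. This is a sketch of standard Kubernetes matchLabels semantics (every key/value pair in the selector has to be present on the object), not Cilium’s actual code. The function names and the second service, internal-only, are hypothetical.

```python
def selector_matches(match_labels, labels):
    """True if every key/value pair of match_labels is present in labels."""
    return all(labels.get(key) == value for key, value in match_labels.items())


def announced_services(service_selector, services):
    """Names of the LoadBalancer services that would get a route announced."""
    if service_selector is None:
        # A missing serviceSelector is not an error, it just selects nothing.
        return []
    return [
        svc["name"]
        for svc in services
        if svc["type"] == "LoadBalancer"
        and selector_matches(service_selector, svc["labels"])
    ]


services = [
    {
        "name": "traefik-ingress",
        "type": "LoadBalancer",
        "labels": {
            "homelab/part-of": "traefik-ingress",
            "homelab/public-service": "true",
        },
    },
    # Hypothetical LoadBalancer service without the public-service label.
    {"name": "internal-only", "type": "LoadBalancer", "labels": {}},
]

print(announced_services({"homelab/public-service": "true"}, services))
print(announced_services(None, services))
```

So a LoadBalancer service still gets an IP from the pool without the label, but no route to it is announced, which makes it unreachable from outside the cluster.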

Running Example

With my current k8s Homelab, I have configured my three worker nodes as neighbors in OPNsense. I’ve also got the following service running for my Ingress:

    apiVersion: v1
    kind: Service
    metadata:
      annotations:
        external-dns.alpha.kubernetes.io/hostname: ingress-k8s.mei-home.net
      labels:
        homelab/part-of: traefik-ingress
        homelab/public-service: "true"
      name: traefik-ingress
      namespace: traefik-ingress
    spec:
      externalTrafficPolicy: Local
      ports:
        - name: secureweb
          nodePort: 31512
          port: 443
          protocol: TCP
          targetPort: secureweb
      type: LoadBalancer

This is a simplified version of the service the Traefik Helm chart automatically creates for me.

Important here are the type: LoadBalancer and the externalTrafficPolicy: Local settings.

It currently has the following IP:

    kubectl get -n traefik-ingress service
    NAME              TYPE           CLUSTER-IP     EXTERNAL-IP   PORT(S)         AGE
    traefik-ingress   LoadBalancer   10.7.122.207   10.86.55.5    443:31512/TCP   32h

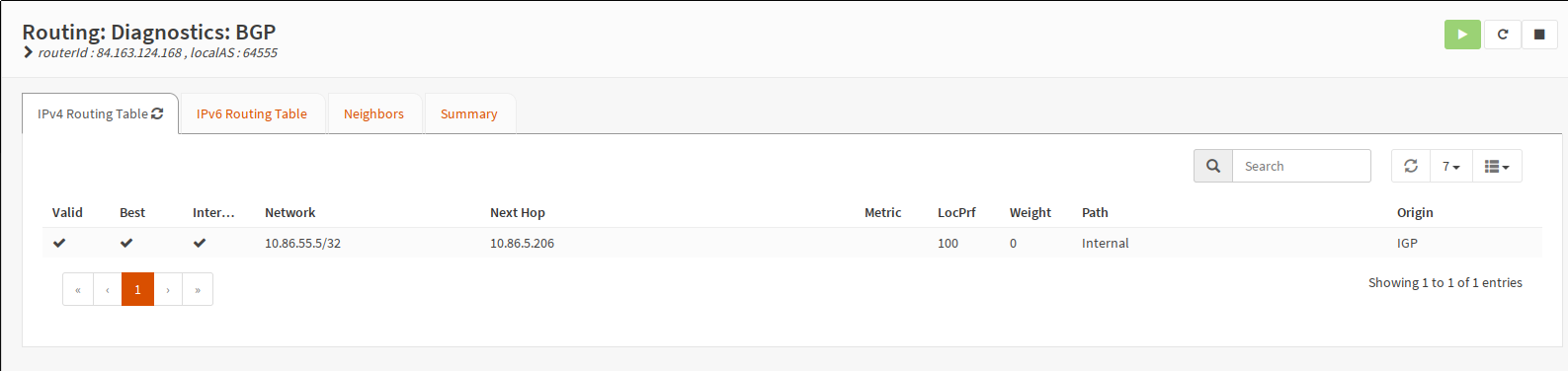

And here is the culmination of this entire article:

Example routing table

So there we are. There’s only one Traefik pod running for the moment, and it’s running on the node with the IP 10.86.5.206. As I said in the beginning, with externalTrafficPolicy: Local, only the nodes which host pods of a given service announce routes to themselves. This prevents intra-cluster routing and preserves the source IP.

I also tried externalTrafficPolicy: Cluster, and in that case all three of my current cluster nodes announce the service IP to OPNsense.
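The difference between the two observations can be summed up in a small function. It only models what I saw in the routing table, not Cilium’s implementation. Of the node IPs, only 10.86.5.206 is real, the other two are hypothetical stand-ins for my remaining workers.

```python
def announcing_nodes(bgp_nodes, pod_nodes, policy):
    """Which nodes announce a route for the service IP under the given policy?"""
    if policy == "Cluster":
        # Every node participating in BGP announces the service IP.
        return sorted(bgp_nodes)
    if policy == "Local":
        # Only nodes which actually host a pod of the service announce it.
        return sorted(set(bgp_nodes) & set(pod_nodes))
    raise ValueError(f"unknown externalTrafficPolicy: {policy}")


# 10.86.5.206 hosts the single Traefik pod, the other two IPs are made up.
workers = ["10.86.5.206", "10.86.5.207", "10.86.5.208"]
traefik_pod_nodes = ["10.86.5.206"]

print(announcing_nodes(workers, traefik_pod_nodes, "Local"))
print(announcing_nodes(workers, traefik_pod_nodes, "Cluster"))
```

With more than one replica spread over several nodes, Local would yield several next hops for the same service IP. Whether the router then actually balances traffic across them depends on its own multipath configuration.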

Finally, another request to my readers: Do you have a favorite book about networking? I was initially completely lost (and as you see from my explanation of BGP, still mostly am) reading about BGP and even ARP when I was working on the L2 announcement. It’s the one big glaring hole in my Homelab knowledge. Took me ages to get started on VLANs as well, for example.

So if you’ve got a favorite book about current important networking tech and protocols, drop me a note on the Fediverse.