This is the third part of my k8s migration series.

This time, I will be talking about using Cilium as the load balancer for my Kubernetes cluster with L2 announcements.

But Why?

A couple of days ago, I was working on setting up my Traefik ingress for the cluster. While doing so, I yet again had to do a couple of things that just felt weird and hacky. The most prominent of those was using hostPort a lot when setting up the pod.

In addition, I would also pin the Traefik pod to a specific host and provide a DNS entry for that host, all hardcoded.

All of this has a couple of downsides. First, if that ingress host running Traefik is down, so is my entire cluster, at least as seen from the outside.

Furthermore, using hostPort and a fixed host also has a problem with the RollingUpdate strategy. Because the ports and the host are fixed, Kubernetes cannot start a fresh pod before the old pod has been killed.

More generally speaking, there’s also the fact that most examples and tutorials, as well as most Helm chart defaults, assume that LoadBalancer type services work.

And with what?

Initially, I looked at two potential load balancer implementations. These were kube-vip and MetalLB. I was initially leaning towards kube-vip, if for no other reason than that I had kube-vip already running on my control plane nodes, providing the VIP for the k8s API endpoint.

But while researching, I found out that newer versions of Cilium also have load balancer functionality. Reading through the documentation, it sounded like it had all the features I wanted. Its biggest advantage is the simple fact that it doesn’t need me to install any additional components into the Kubernetes cluster. It’s just a couple of configuration changes in Cilium, plus two more manifests.

Interlude: Migrating the Cilium install to Helm

Before I started, I decided to change my Cilium install approach. Up to now, I had Cilium installed via the Cilium CLI, as described in their Quick Start Guide.

In my mind, there is one pretty big downside to this approach: it relies on manual invocations of a tool with a specific set of parameters. It’s also not simple to put under version control properly. Sure, I could always create a bash script which contains the entire invocation with the right parameters, but that’s just not too nice.

So instead of having to document somewhere with which command line parameters I needed to invoke the Cilium CLI, I switched it all over to Helm and Helmfile, so now it’s treated like everything else in the cluster.

The migration was pretty painless, because in the background, the Cilium CLI already just calls Helm.

So for the migration, I first needed to get the translation of the command line parameters into the Helm values for my running install. That can be done with Helm like this:

    helm get values cilium -n kube-system -o yaml

I then put those values into a values.yaml file for use with Helmfile.

The Helmfile config looks like this:

    repositories:
      - name: cilium
        url: https://helm.cilium.io/
    releases:
      - name: cilium
        chart: cilium/cilium
        version: v1.14.5
        namespace: kube-system
        values:
          - ./value-files/cilium.yaml

The cilium.yaml values file looks like this:

    cluster:
      name: my-cluster
    encryption:
      enabled: true
      type: wireguard
    ipam:
      mode: cluster-pool
      operator:
        clusterPoolIPv4PodCIDRList: 10.8.0.0/16
    k8sServiceHost: api.k8s.example.com
    k8sServicePort: 6443
    kubeProxyReplacement: strict
    operator:
      replicas: 1
    serviceAccounts:
      cilium:
        name: cilium
      operator:
        name: cilium-operator
    tunnel: vxlan

With this config, no redeployment is necessary, as it is equivalent to what the Cilium CLI does.

Cilium L2 announcements setup

Cilium (and load balancers in general, it seems) has two modes for announcing IPs of services. The more complex one is the BGP mode. In this mode, Cilium would announce routes to the exposed services. This needs an environment where BGP is configured. I decided to skip this approach, as my network knowledge in general isn’t that great. I’ve only got a relatively hazy idea of what BGP even does.

So I settled on the simpler approach, L2 Announcements. In this approach, all Cilium nodes in the cluster take part in a leader election for each of the services which should be exposed and receive a virtual IP. The node which wins the election then answers any ARP requests asking for the MAC address of the node with the service virtual IP. The node then regularly renews a lease in Kubernetes to signal to all other nodes in the cluster that it’s still there. If a lease isn’t renewed in a certain time frame, another node takes over the ARP announcements.

One consequence of this approach is the fact that this is not true load balancing. All traffic for a given service will always arrive at one specific node. From the documentation, this is different when using the BGP approach, as that approach does provide true load balancing. But what the L2 announcements approach does provide is fail over, and this is all that I really care about for my setup, at least for now.
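To make the lease mechanism a bit more concrete, here is a minimal Python sketch of lease-based failover. It is a toy model and not Cilium's actual implementation: the `Lease` class, the node names and the 15 second lease duration are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Lease:
    """Toy stand-in for a Kubernetes Lease object (hypothetical, simplified)."""
    holder: Optional[str] = None
    renewed_at: float = 0.0


def try_acquire_or_renew(lease: Lease, node: str, now: float, lease_duration: float) -> bool:
    """Return True if `node` holds the lease after this attempt."""
    expired = lease.holder is None or now - lease.renewed_at > lease_duration
    if lease.holder == node or expired:
        # Either we already hold the lease (renew) or nobody renewed it in time (take over).
        lease.holder = node
        lease.renewed_at = now
        return True
    return False


lease = Lease()
duration = 15.0  # assumed lease duration in seconds, for illustration only

# t=0: node-a wins the election and would start answering ARP requests.
print(try_acquire_or_renew(lease, "node-a", now=0.0, lease_duration=duration))
# t=5: node-b checks, but the lease is still fresh, so it stays passive.
print(try_acquire_or_renew(lease, "node-b", now=5.0, lease_duration=duration))
# t=10: node-a renews its lease.
print(try_acquire_or_renew(lease, "node-a", now=10.0, lease_duration=duration))
# node-a dies after t=10. At t=30 the lease has expired, so node-b takes over.
print(try_acquire_or_renew(lease, "node-b", now=30.0, lease_duration=duration))
print(lease.holder)
```

The point of the sketch is that there is only ever one holder per service at a time, which is why this gives fail over but not load balancing.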

Cilium config

The first step in enabling L2 announcements is to enable the Helm option:

    l2announcements:
      enabled: true

Once that was done, I had the problem that nothing seemed to happen at all. It turns out that the Helm options are written into a ConfigMap in the Cilium Helm chart, which is then read by the Cilium pods. And the pods are not restarted automatically. So to get the option to take any effect, I had to run the following two commands after deploying the updated Helm chart:

    kubectl rollout restart -n kube-system deployment cilium-operator
    kubectl rollout restart -n kube-system daemonset cilium

Then the option was active. You can see the active options in the log output of the cilium-operator and cilium pods if you ever want to check what the pods are actually running with.

If anybody out there has any idea what I might have done wrong, needing those manual rollout restart calls, please ping me on Mastodon.

But still, nothing happens just from enabling the option. There are two manifests which need to be deployed.

Load balancer IP pools

First, a CiliumLoadBalancerIPPool manifest needs to be deployed. This manifest controls the pools of IPs which are handed out to LoadBalancer type services. In my setup, the manifest looks something like this:

    apiVersion: "cilium.io/v2alpha1"
    kind: CiliumLoadBalancerIPPool
    metadata:
      name: cilium-lb-ipam
      namespace: kube-system
    spec:
      cidrs:
        - cidr: "10.86.5.80/28"

It defines a relatively small IP range, as I don’t expect to expose too many services. Most of what I will expose will run through the ingress service. Documentation on the pools and additional options can be found here.
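As a quick sanity check of how small that range is, the CIDR can be expanded with Python's standard `ipaddress` module. This is only arithmetic on the CIDR itself. Which of these addresses Cilium actually hands out depends on its allocation rules, which this sketch does not model.

```python
import ipaddress

# The CIDR from the CiliumLoadBalancerIPPool manifest.
pool = ipaddress.ip_network("10.86.5.80/28")

print(pool.num_addresses)   # number of addresses covered by the CIDR
print(pool[0], pool[-1])    # first and last address in the range

# The external IP that shows up in the example service further down.
assigned = ipaddress.ip_address("10.86.5.93")
print(assigned in pool)
```

A /28 covers 16 addresses, here 10.86.5.80 through 10.86.5.95, so the 10.86.5.93 from the later example falls inside the pool.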

L2 announcement policies

The second piece of config is the configuration for which services should get an IP and which nodes should do the L2 announcements. This is done via a CiliumL2AnnouncementPolicy manifest, which is documented here.

For me, the config looks like this:

    apiVersion: "cilium.io/v2alpha1"
    kind: CiliumL2AnnouncementPolicy
    metadata:
      name: cilium-lb-all-services
      namespace: kube-system
    spec:
      nodeSelector:
        matchLabels:
          homelab/role: worker
      serviceSelector:
        matchLabels:
          homelab/public-service: "true"
      loadBalancerIPs: true

This restricts the announcements to only happen from my worker nodes, not from the control plane or Ceph nodes.

In addition, I’m adding a serviceSelector here, so that only certain services get an IP and are announced. This is necessary due to this bug.

The bug leads to all services being considered for L2 announcements, regardless of whether they are of type LoadBalancer or not. This doesn’t make much sense at all, and also costs performance, which I will go into in a later section.
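The effect of a `matchLabels` selector can be sketched in a few lines of Python. This is a simplified illustration of the general Kubernetes label-matching semantics (every key/value pair in the selector must be present on the object), not Cilium's code. The `internal-service` entry is a hypothetical service without the label.

```python
def matches(match_labels: dict, labels: dict) -> bool:
    """True if every key/value pair in match_labels is present in labels."""
    return all(labels.get(key) == value for key, value in match_labels.items())


# The serviceSelector from the policy above.
service_selector = {"homelab/public-service": "true"}

services = {
    # Carries the label, so it would be selected for announcement.
    "testsetup-service": {"homelab/public-service": "true"},
    # Hypothetical service without the label, so it would be skipped.
    "internal-service": {"app": "internal"},
}

announced = [name for name, labels in services.items() if matches(service_selector, labels)]
print(announced)
```

Only the labeled service ends up in the list, which is exactly the restriction I want as a workaround for the bug.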

Example

With all of that config done, let’s have a look at an example. I used the following deployment:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: testsetup
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: testsetup
      template:
        metadata:
          labels:
            app: testsetup
        spec:
          containers:
            - name: echo-server
              image: jmalloc/echo-server
              ports:
                - name: http-port
                  containerPort: 8080

This is just a simple echo server which returns a bit of information on the HTTP request it received. Then this is the service for exposing that pod:

    apiVersion: v1
    kind: Service
    metadata:
      name: testsetup-service
      labels:
        homelab/public-service: "true"
      annotations:
        external-dns.alpha.kubernetes.io/hostname: testsetup.example.com
    spec:
      type: LoadBalancer
      selector:
        app: testsetup
      ports:
        - name: http-port
          protocol: TCP
          port: 80
          targetPort: http-port

As noted above, only services with the homelab/public-service="true" label are handled by the Cilium L2 announcements. In addition, I’m supplying the service with an external-dns hostname to get an automated DNS entry.

In short, any requests which reach the service IP on port 80 are forwarded to port 8080 in the pod, which is where the echo-server is listening.

One very important thing to note: Use curl for testing! Ping won’t work, as the service IP does not answer to ping.

When starting to debug, first check whether the service got an IP assigned:

    kubectl get -n testsetup service
    NAME                TYPE           CLUSTER-IP     EXTERNAL-IP   PORT(S)        AGE
    testsetup-service   LoadBalancer   10.7.174.128   10.86.5.93    80:32206/TCP   14h

The important part here is the EXTERNAL-IP column.

Next, check whether any node has created a Kubernetes lease, signaling that it is announcing the service:

    kubectl get -n kube-system leases.coordination.k8s.io
    NAME                                            HOLDER   AGE
    cilium-l2announce-testsetup-testsetup-service   sehith   13h

You can also use arping to check whether there’s anyone announcing the IP:

    arping 10.86.5.93
    58 bytes from 00:16:3e:17:a4:31 (10.86.5.93): index=0 time=253.747 usec

Important to note: arping will only work from within the same subnet, as ARP is a layer 2 protocol. Ask me how much time I spent trying to figure out why I didn’t get an answer on an arping from a separate subnet. 😉
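A small Python check along these lines would have saved me some time. It only tests whether the machine running arping and the service IP fall into the same subnet. The `10.86.5.0/24` subnet and the two client addresses are assumptions for illustration, so substitute the values of your own network.

```python
import ipaddress


def same_subnet(client: str, target: str, subnet: str) -> bool:
    """True if both addresses lie inside the given subnet."""
    net = ipaddress.ip_network(subnet)
    return ipaddress.ip_address(client) in net and ipaddress.ip_address(target) in net


lan = "10.86.5.0/24"  # assumed subnet, not taken from my actual network config

# Hypothetical client in the same subnet as the service IP: arping can work.
print(same_subnet("10.86.5.20", "10.86.5.93", lan))
# Hypothetical client in a different subnet: ARP requests never reach the announcing node.
print(same_subnet("10.86.1.20", "10.86.5.93", lan))
```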

Performance

One last point I’ve got to bring up is the efficiency of Cilium’s L2 load balancer approach.

As noted, this bug initially made Cilium announce every service in my cluster, type=LoadBalancer or not.

This produced quite a high load increase on one of my control plane nodes:

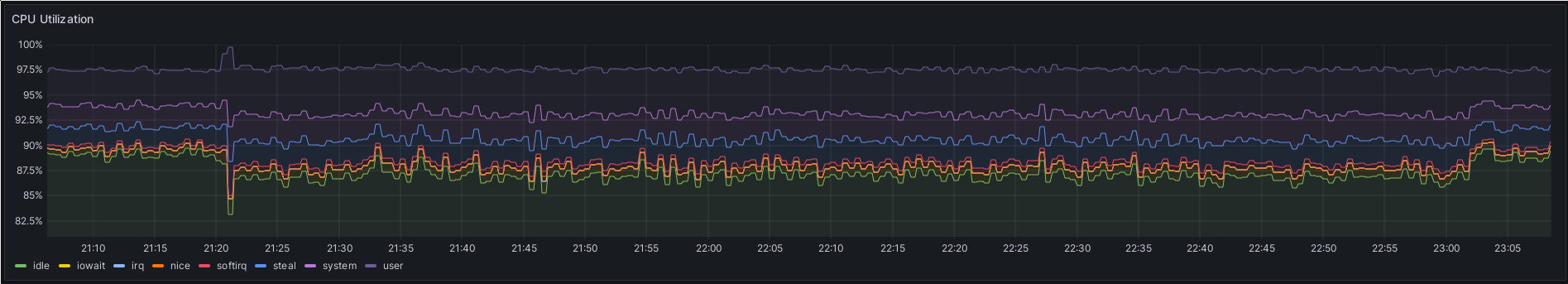

L2 announcements were enabled for the first time around 21:20. At around 23:03, I reduced the L2 announcements to a single service, instead of 5.

The CPU load on this 4 core control node was increased by about 2% during the time where Cilium had to announce the 5 services I had defined in my cluster. This is most likely all API server/etcd load, as Cilium uses Kubernetes' leases functionality. For every L2 announcement, all nodes continuously check whether the current lease holder is still holding the lease, so that another node can take over if the one which previously did the announcement for the service failed for some reason.

This 2% load increase was from only five services with three nodes in the cluster. My cluster will very likely end up with 9 worker nodes in the end, and possibly more than 5 services. I really don’t like where that might lead.
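To get a feeling for how this might grow, here is a deliberately simplistic Python sketch. It assumes that every announcing node takes part in the lease election for every announced service, so the number of node/lease pairs is just the product of the two. The 10 services in the last line are a hypothetical number. The sketch counts pairs only and says nothing about the actual CPU load.

```python
def lease_participants(nodes: int, services: int) -> int:
    """Number of (node, service lease) pairs, assuming every node watches every lease."""
    return nodes * services


current = lease_participants(nodes=3, services=5)    # the setup measured above
planned = lease_participants(nodes=9, services=5)    # 9 workers, same 5 services
larger = lease_participants(nodes=9, services=10)    # hypothetical: 10 services

print(current, planned, larger)
print(planned / current, larger / current)  # growth factor relative to the current setup
```

Under that assumption, going from 3 to 9 nodes alone triples the number of participants, before adding a single new service.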

I will have to keep my eye on this while I migrate more hosts and services over from Nomad. If it gets too bad, I will have to return to this topic and try out MetalLB, or potentially go ahead and have a look at BGP after all.