In a previous post, I had noted that due to HashiCorp’s recent decisions about the licensing for their tools, I was thinking about switching away from Nomad as my workload scheduler.

Since then, HashiCorp made a change to the Terraform registry’s Terms of Service which only allowed usage with HashiCorp Terraform. This was obviously an action against OpenTofu, and it reeked of pure spite. That turned my musings about the future of my Homelab from “okay, this leaves a bad taste” to “Okay, I just lost all trust in HashiCorp”. So Kubernetes it is.

Just to make one thing clear: Both Nomad and Consul, which I will be replacing here, worked great for me. They provided everything I could have wished for, in a rather lightweight package. And the integration was excellent. I also think the documentation for all HashiCorp tools deserves a lot of praise. There’s no technical reason to replace Nomad and Consul. It’s purely due to the license change, and even more so due to the ToS change which followed.

After some experimentation with Kubernetes, I’m satisfied that it’s going to work for everything which I’m currently doing with Nomad, and I’ve spent the last few weeks on making a plan to migrate.

My one main goal here is to make the migration as incremental as possible. To me, this has the advantage of reducing the pressure, because I can just migrate service-by-service, slowly, at any pace which fits the rest of my life.

To this end, I intend to run my Nomad and Kubernetes clusters in parallel. The one big problem with this: Depending on time and motivation, this might draw out the migration quite a bit. I might still be running two workload schedulers come spring 2024. 😅

The current situation

Let’s start with the current state of my Homelab.

I’m running most of the Homelab on Raspberry Pi 4s. Three of them with 4GB RAM serve as controllers, each hosting one Vault, Nomad and Consul server as well as a Ceph MON daemon, for high availability purposes. My main workhorses are the eight Pi CM4s with 8GB, each hosting a Consul and Nomad client running my workloads. Storage is provided by three x86 machines, each with one HDD and one SSD.

Nomad is the main workload scheduler. Consul provides both service discovery and authenticated as well as encrypted connections between different Nomad jobs. Vault is used for secrets, not only within Nomad jobs but also, for example, by my Ansible playbooks.

Ceph provides storage to Nomad jobs via CSI as well as the root disks for the eight worker Pis, which netboot and are completely diskless.

At the time of writing, 70% of the cluster’s CPU and 46% of the RAM are assigned to jobs. But in reality, the cluster overall is about 90% CPU idle. All of this together currently eats about 150W.

The Plan

As noted, I would like to do the migration incrementally, keeping everything up as much as possible. The first challenge in that was finding more hardware to run the two clusters in parallel. Luckily, I’ve got my old x86 machine from before I ventured into multi-host territory. It has an Intel 8C/16T CPU, 64 GB of RAM and a couple of 500 GB SSDs. That’s more than enough power to run my entire Homelab, if necessary. In addition, I’ve got my spare disks for when one of the prod disks fails, a 2TB SSD and a 6TB HDD.

I already used the x86 machine as the host for my Kubernetes experiments and will now use it in a similar way, with LXD VMs running three Kubernetes controllers, one Ceph host for using the disks and a couple of worker VMs.

Preparation

To begin with, I will create the aforementioned VMs and init the cluster itself. After that, I will migrate the first host. This will also double as a test to see whether everything works fine on Raspberry Pis, and I will also be writing an Ansible playbook to remove all the Nomad cluster’s tools from a host.

Once that’s done, the first couple of services will be foundational stuff, like external-dns and external-secrets. Then the first migrated Pi will become the Ingress host with a Traefik deployment.

Ceph

I will continue using Ceph. It has served me very well in the past two years and I know my way around it by now. But instead of continuing with the current baremetal cluster, I will go with Ceph Rook, a Ceph cluster deployed in Kubernetes. This approach will have the advantage that I will be able to use the Ceph hosts also for other workloads than Ceph.

Sadly, Ceph Rook does not support any kind of import from a baremetal cluster. There is no way to create daemons in Rook and join them into a baremetal cluster. As a consequence, I will be setting up a fresh cluster in Kubernetes, and then slowly migrate the data over as I migrate hosts and services from Nomad to Kubernetes. Luckily, my cluster is still empty enough that I can take one host out of the baremetal cluster and add it, and its disks, to the Rook cluster. The Rook cluster will then consist of one VM using my spare disks plus one of the former baremetal hosts, while the other two baremetal hosts stay in the baremetal cluster.

For the data transfer, I will very likely just use rsync. Export/import doesn’t make much sense, especially for CSI volumes: they will be created and maintained by Rook/Kubernetes, so importing them as whole volumes would need even more config to make sure the volume request gets the existing volume.

For the setup itself, I will need to create a number of StorageClasses. There will be two for RBD volumes, the main volume type for my CSI volumes. One will be SSD, one HDD, depending on which kind of performance is needed by a given service. Then there will also be a CephFS class, for those few cases where I need multiple writer capabilities. The same goes for the S3 StorageClass. These two only get HDD variants, as I don’t expect high throughput requirements here anyway.

S3 content

After the Ceph Rook cluster is set up, the first data to be migrated will be all the S3 buckets which are not directly related to a specific service. These are mostly my restic backups and some misc stuff, like the Terraform bucket.

Migrating the Logging setup

This is going to be the first actual migration. Because I don’t care too much about my previous logs, I will simply create a completely new setup and not bother to transfer the S3 bucket with my logs.

The setup will be similar to my current Nomad setup. Loki will do log storage, which will be accessed via Grafana. Then comes my FluentD instance, which aggregates the logs and unifies them, e.g. making sure there is only one level for “info”, instead of INF, info and I. That instance will push logs to Loki. I will also redirect all my logs, meaning syslogs from hosts and service logs from Fluentbit, to this k8s instance and then retire Loki/FluentD from my Nomad setup.
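To illustrate the kind of level unification meant here, a minimal Python sketch follows. It is not the actual FluentD configuration: the alias table and the function name `normalize_level` are assumptions made up for the example, and a real setup would express the same mapping in FluentD's own filter config.

```python
# Hypothetical alias table (an assumption for this sketch); the real
# setup likely has to cover more spellings than these.
LEVEL_ALIASES = {
    "i": "info", "inf": "info", "info": "info",
    "w": "warn", "warn": "warn", "warning": "warn",
    "e": "error", "err": "error", "error": "error",
    "d": "debug", "dbg": "debug", "debug": "debug",
}


def normalize_level(raw: str) -> str:
    """Map a service-specific level spelling to one canonical name."""
    key = raw.strip().lower()
    # Unknown spellings are passed through lowercased instead of dropped,
    # so no log line loses its level entirely.
    return LEVEL_ALIASES.get(key, key)
```

With this table, `INF`, `info` and `I` all end up as `info`, which keeps a single level filter in Grafana working across services.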

As said, this should be relatively simple because I don’t care about preserving past logs.

At this point, the k8s cluster will be running the logging setup for the entire Homelab. So it will have become load-bearing.

Setting up metrics gathering

This part is a bit more complicated because I won’t be migrating my old metrics stack with Prometheus and Grafana over 1:1. Instead, I will start using the kube-prometheus-stack. Here I do want to preserve old data, as I like looking at older metrics as well as current ones. This showed the first challenge during planning: In Nomad, volumes are created separately from the main job. For Kubernetes, I will be using Helm as my “job” management tool. My current idea for cases where I want to migrate data over is to do the first deployment of the Helm chart with zero replicas for the pod, thus just creating everything else including volumes.

Another interesting difference is going to be Grafana. From everything I understand now, Grafana’s Helm chart relies on provisioning for things like data sources, dashboards and the like. And in principle, I like the idea of having my dashboards in Git. But it remains to be seen how much exporting them to Git after every change starts annoying me.

On the positive side: Lots more data and pretty graphs about Kubernetes to look at. 😄

The idea here is similar to the logging section: I will retire my Nomad setup and let the k8s Prometheus instance do all the scraping for the Homelab.

One open question is going to be about the CSI volume utilization data. At the moment, I’m running a cronjob on all workers which regularly reports the results of a filtered df -h via the local node-exporter’s textfile feature.
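For readers unfamiliar with the textfile mechanism, here is a rough Python sketch of the idea. It is not the cronjob described above: it swaps `df -h` for `shutil.disk_usage`, and the metric names, the `mountpoint` label and all function names are assumptions invented for the example. It also assumes mount paths contain no quotes or backslashes, which would need escaping in label values.

```python
import os
import shutil
import tempfile


def collect(mounts):
    """Return {mountpoint: (used_bytes, total_bytes)} for the given paths."""
    usage = {}
    for mount in mounts:
        du = shutil.disk_usage(mount)
        usage[mount] = (du.used, du.total)
    return usage


def render(usage):
    """Render the usage data in the Prometheus text exposition format."""
    lines = [
        "# HELP homelab_volume_used_bytes Bytes used on a mounted volume.",
        "# TYPE homelab_volume_used_bytes gauge",
    ]
    for mount, (used, _total) in sorted(usage.items()):
        lines.append(f'homelab_volume_used_bytes{{mountpoint="{mount}"}} {used}')
    lines += [
        "# HELP homelab_volume_size_bytes Total size of a mounted volume.",
        "# TYPE homelab_volume_size_bytes gauge",
    ]
    for mount, (_used, total) in sorted(usage.items()):
        lines.append(f'homelab_volume_size_bytes{{mountpoint="{mount}"}} {total}')
    return "\n".join(lines) + "\n"


def write_atomically(path, text):
    """Write to a temp file first, then rename, so the exporter never
    reads a half-written file."""
    directory = os.path.dirname(path) or "."
    fd, tmp = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as fh:
        fh.write(text)
    os.chmod(tmp, 0o644)  # mkstemp creates the file readable by its owner only
    os.replace(tmp, path)
```

A cronjob would call something like `write_atomically(target, render(collect(mounts)))`, with `target` being a `.prom` file inside the directory node-exporter is pointed at via `--collector.textfile.directory`.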

Setting up a Docker registry

At the moment, I run two Docker registries. One for my own Docker images, and one as a pull-through cache for DockerHub. I will be trying out Harbor to see how I like it.

Backups

My backup setup currently consists of some simple Python scripting driving restic, which does incremental backups of all locally mounted volumes every night to my Ceph S3. This doesn’t get me more redundancy, as the S3 is stored on the same disks as the (mostly) Ceph RBD volumes used with CSI. But it does protect me from fat-fingered rm -rf / commands. I will go into more detail about what I’m doing exactly in a separate post when I find the time.

In addition, I’ve got a second job which downloads all of the backup S3 buckets onto an external HDD via rclone.

No off-site backup yet. 😬

This part will likely require at least a limited rewrite of my Python scripting. Because the backups run per node and back up whatever happens to run there at the time the backup job runs, I will be able to continue running the per-node backup job on both clusters in parallel, as they will be backing up different services’ data to different backup S3 buckets.
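The per-node, per-service idea can be sketched in a few lines of Python. This is not the actual backup script: the `backup-<service>` bucket naming, the endpoint URL and both function names are assumptions for illustration. Credentials and the repository password would come from the environment and are left out.

```python
def restic_backup_cmd(s3_endpoint, bucket, path):
    """Build a restic invocation backing up one path to one S3 bucket."""
    repo = f"s3:{s3_endpoint}/{bucket}"
    return ["restic", "--repo", repo, "backup", path]


def plan_backups(s3_endpoint, mounted):
    """Plan one backup per service volume currently mounted on this node.

    `mounted` maps a service name to the local mount path of its volume.
    The bucket naming scheme is hypothetical.
    """
    return [
        restic_backup_cmd(s3_endpoint, f"backup-{service}", path)
        for service, path in sorted(mounted.items())
    ]
```

Since each node only sees the volumes mounted locally, a service that has moved to Kubernetes simply stops showing up in the Nomad node's plan and appears in the plan of whichever Kubernetes node runs it.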

Service migration

With all of the previous sections done, all of the infrastructure is now in place and I can begin migrating the services.

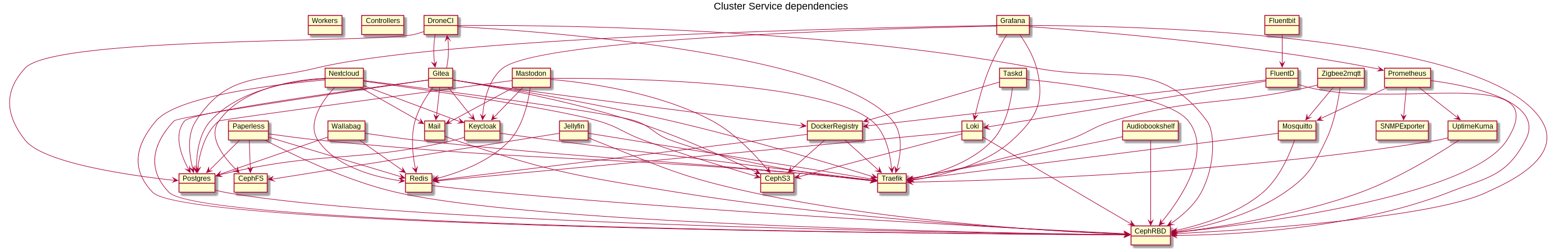

Here is an overview of my services and their dependencies.

The first service to be created will be Postgres. Here, I decided to go with a proposal from Rachel, cloudnative-pg. I will then migrate each database when I migrate the service using it, via importing/exporting.

After that will come Audiobookshelf. This service will serve as a testbed for service migration, and I will write up some documentation on service migration and create a Helm chart template for the rest of the migrations.

After that I don’t expect many surprises. Where available, I will use the official Helm chart for a service. Otherwise, I will be writing my own. Each individual migration will consist of deploying the Helm chart first with zero replicas, to create e.g. S3 buckets or CSI volumes. Then I will migrate databases, volume and S3 data and then start up the k8s instance with Ingress via my Traefik.
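The two-phase deployment could look roughly like the following Python sketch, which only builds the Helm command lines. The values key `replicaCount` is an assumption: it is a common convention, but every chart names its replica setting differently, so it would need checking per chart. The function names are made up for the example as well.

```python
def helm_cmd(release, chart, namespace, replicas, replica_key="replicaCount"):
    """Build a `helm upgrade --install` call pinning the replica count.

    `replica_key` is chart-specific; "replicaCount" is only a common default.
    """
    return [
        "helm", "upgrade", "--install", release, chart,
        "--namespace", namespace,
        "--set", f"{replica_key}={replicas}",
    ]


def migration_commands(release, chart, namespace):
    """Return the two Helm calls bracketing the data migration."""
    return [
        # Phase 1: create volumes, buckets, etc. without starting a pod.
        helm_cmd(release, chart, namespace, replicas=0),
        # (databases, volume and S3 data are copied over between the calls)
        # Phase 2: start the service on the migrated data.
        helm_cmd(release, chart, namespace, replicas=1),
    ]
```

Each command list could be handed to `subprocess.run(cmd, check=True)`, with the data copy happening between the two calls.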

Somewhere in the middle of all this, I will also have to update my host update Ansible playbook, to properly fence off the Kubernetes hosts before rebooting them.

The one service which I thought might be problematic is Drone CI. By default, its runner runs CI pipelines in Docker. From what I’ve read, I might be able to set up Docker-in-Docker pods and run there. But quite honestly, the Docker runner requires mounting in the Docker socket, giving the runner root access, and I had planned to migrate to Woodpecker anyway. So I will just do this as part of the Kubernetes migration, as Drone CI’s Kubernetes runner is still marked experimental, while Woodpecker’s isn’t.

Cleanup

The final step, after all workers are in Kubernetes, will be to migrate the three Raspberry Pi 4 controller nodes over to serve the Kubernetes cluster. This will be a bit complicated. I can shut down the Nomad cluster completely once the last job is done, but the Consul cluster is different.

There are two things which rely on it: First, proper scraping of the Ceph cluster MGR daemon for metrics. Here Consul’s healthcheck connected DNS is currently used to find the active MGR instance. Second, access to my three Vault servers requires Consul for high availability. Here, I’m still not sure how I will solve this. I might possibly just migrate the Vault cluster into Kubernetes as well.

Once the last few bits of data are cleared from the baremetal Ceph cluster, I can finally migrate over the two baremetal servers to the Ceph Rook cluster. To begin with, I will have them restricted to Ceph pods, but I will also test what happens when I remove the “Ceph” taint I currently plan to put onto them. But to make that decision, I will have to look more deeply into how Kubernetes scheduling and especially preemption work.

The final act of the migration will be updating all docs (🤞), removing Nomad/Consul setups from my Ansible playbooks and finally shutting down the VMs and retiring the x86 host again.

For this entire migration, to make sure I do not forget anything, I have also created no fewer than 698 tasks in my favorite task management software, Taskwarrior. I’m accepting bets on how many tasks in I will have to nuke the plan and start fresh. 😉